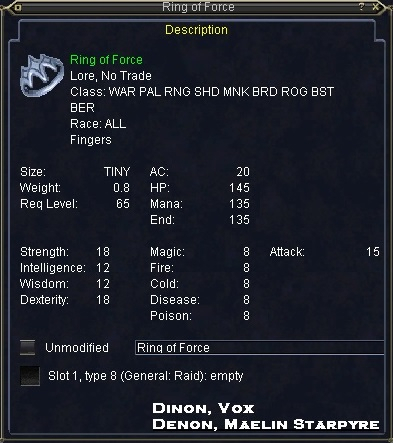

Ring of Force


Drops

This item is found on creatures.

Plane of Time B


9 posts

berserkers can wear too

7 posts

The only 360 caps out there are for casters with AA abilities.

31 posts

ring drops off Anar of Water in tier 1 trials...

5 posts

Nah, drops randomly off any named in Tier 1 trials. Just like any other Tier 1-3 loot that is.

By the time you get to Time you should have a good set of Elemental gear. Melee Elemental gear caps worn attack at 1600, I do believe, and you need buffs to get past that. Time loot is just about the HP, IMO.

3 posts

Ya know, what's SoE gonna do when a bunch of toons get a lot of gear like this? I mean really, stats cap at 305. With about 6 items of this caliber, you could be sitting above that in a few stats unbuffed... What then?

#REDACTED,

Posted: Oct 09 2003 at 8:53 AM, Rating: Sub-Default

AA abilities raise the cap to 360 I believe.

39 posts

GREAT ring... I'm jealous :). But I think that the effect could be better. Yeah, I know that attack is REALLY important... but only 15? On something that drops from Plane of Time? Seems like 50 attack or something would be more fitting.

#REDACTED,

Posted: Jun 02 2003 at 3:30 PM, Rating: Sub-Default

I know, that's what I'm saying. WTF... this is the Plane of Time... if a ring with only one effect is going to drop and that effect is something like Vengeance, then for the love of god make it Vengeance X. Nice, but still on the newbie side of godliness.

#REDACTED,

Posted: May 30 2003 at 11:30 PM, Rating: Sub-Default

I got this ring last night. It's not bad; I like the Levitation effect that Vengeance III gives. It's really cool to always be flying.

#REDACTED,

Posted: Aug 21 2003 at 10:19 AM, Rating: Sub-Default

I want 60 billion plat too... If I had that much I could fix California.

30 posts

your a moron, and i mean that in a very caring way.

#REDACTED,

Posted: May 31 2003 at 12:10 AM, Rating: Sub-Default

You shouldn't insult what other people say, especially when you cannot use proper grammar.

Does not change the fact that he is correct.

Tephlonb

55 Preserver

Cazic Thule
